When doing SEO for Google, we naturally want search engine spiders to crawl and index our website's content every day. However, if the server has limited resources, overly frequent crawling by Google's spiders can exhaust those resources or slow down page loading. In that case, we can reduce the search engine's crawl rate so that the website stays accessible and is not overwhelmed by crawler traffic.

Set Googlebot's Crawl Rate

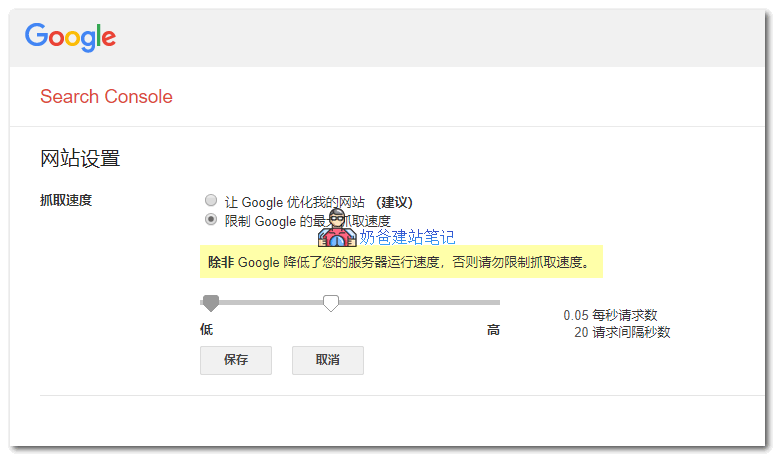

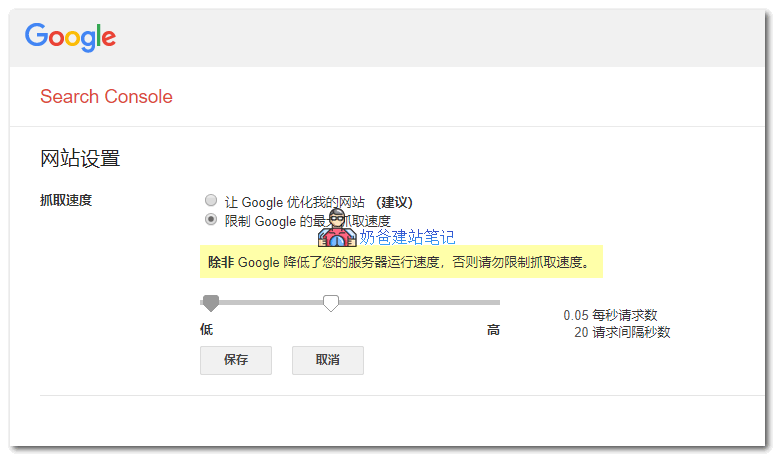

Google uses advanced algorithms to determine the optimal crawl rate for a website. Each time Googlebot visits your site, it tries to crawl as many pages as possible without overloading your server's bandwidth. If Google sends so many requests per second that your server slows down, you can limit the speed at which Google crawls your site.

You can limit the crawl rate for root-level websites (e.g., www.example.com and http://subdomain.example.com), but you cannot change it for non-root-level websites (e.g., www.example.com/folder). The crawl rate you set is the maximum rate for Googlebot, and Googlebot may not actually reach this limit. We recommend not limiting the crawl rate unless you notice server load issues and have determined that they are caused by Googlebot accessing your server too frequently.
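Before limiting anything, it helps to check whether Googlebot is really the source of the load. The following is a minimal sketch, assuming your server writes access logs in the common "combined" log format; the function name `googlebot_hits_per_minute` and the sample log lines are made up for illustration. It matches on the user-agent string only, which can be spoofed, so treat the result as a rough indicator rather than proof.

```python
import re
from collections import Counter

# Assumed "combined" log format:
# ip - - [10/Oct/2023:13:55:36 +0000] "GET /a HTTP/1.1" 200 512 "referer" "user agent"
LOG_LINE = re.compile(
    r'\[(?P<minute>\d{2}/\w{3}/\d{4}:\d{2}:\d{2}):\d{2} [^\]]+\] '
    r'"[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_per_minute(lines):
    """Count requests per minute whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("minute")] += 1
    return hits


# Hypothetical sample data; in practice, pass the lines of your own access log.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /a HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Oct/2023:13:55:41 +0000] "GET /b HTTP/1.1" 200 734 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Oct/2023:13:55:50 +0000] "GET / HTTP/1.1" 200 901 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    f'66.249.66.1 - - [10/Oct/2023:13:56:02 +0000] "GET /c HTTP/1.1" 200 120 "-" "{GOOGLEBOT_UA}"',
]

hits = googlebot_hits_per_minute(SAMPLE_LOG)
for minute, count in sorted(hits.items()):
    print(minute, count)
```

If the busiest minutes in this count line up with the periods when your server is slow, limiting the crawl rate is worth considering; if not, the cause probably lies elsewhere.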

Specific Limitation Method:

Open the property's "Crawl Rate Settings" page.

- If the crawl rate is "Calculated optimal rate", the only way to reduce the crawl rate is to submit a special request. You cannot increase the crawl rate.

- Otherwise, select the corresponding option and limit the crawl rate as needed. The new crawl rate is valid for 90 days.

Set Crawl Rate for All Search Spiders

In addition to the Google-specific setting, you can use the Crawl-delay directive in the robots.txt file to set the crawl frequency for search engines. Several search engines, such as Bing, support the Crawl-delay parameter; Googlebot ignores it, so Google's crawl rate has to be limited with the setting described above. The parameter sets how many seconds to wait between consecutive requests to the same server:

    User-agent: *
    Crawl-delay: 10

Add the above lines to your website's robots.txt file and wait for search engine spiders to fetch the file and pick up the change. It is rare for spiders to overwhelm a website, but it does happen. An ordinary independent foreign-trade website usually has little content to begin with, so there is no need for spiders to crawl it around the clock. If crawling slows the website down, both SEO performance and user experience suffer, so consider this measure when you find your website being crawled aggressively by spiders.
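To check that a Crawl-delay rule is written in a way a parser can read, you can run it through a robots.txt parser before publishing it. The sketch below uses `urllib.robotparser` from Python's standard library; the crawler name `ExampleBot` is a placeholder, and other crawlers may parse the file somewhat differently. It also shows why the `User-agent` and `Crawl-delay` lines should sit directly under each other: this parser treats a blank line after `User-agent` as the end of the group and drops the rule.

```python
from urllib.robotparser import RobotFileParser


def read_crawl_delay(robots_txt, user_agent="ExampleBot"):
    """Return the Crawl-delay (in seconds) that applies to user_agent, or None."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.crawl_delay(user_agent)


# The two directives on adjacent lines form one group.
GOOD_RULES = "User-agent: *\nCrawl-delay: 10\n"

# A blank line between the directives splits the group for this parser.
SPLIT_RULES = "User-agent: *\n\nCrawl-delay: 10\n"

delay_good = read_crawl_delay(GOOD_RULES)
delay_split = read_crawl_delay(SPLIT_RULES)

print("adjacent lines:", delay_good)
print("blank line in between:", delay_split)
```

A result of `None` means this parser found no delay for the given user agent, which usually points to a formatting problem in the file.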
