What is robots.txt

Robots.txt, also known as the robots protocol (Robots Exclusion Protocol), is a widely accepted ethical standard in the international internet community. It is a text file located in your website's root directory that tells search engines which pages may be crawled and which may not. It can block larger files on the website, such as images, music, and videos, to save server bandwidth; it can block dead links on the site, making it easier for search engines to crawl website content; and it can list sitemap links to guide spiders in crawling pages.

How to create a robots.txt file

You only need a text editor, such as Notepad, to create a text file named robots.txt, and then upload this file to the website's root directory. You can also use a robots generation tool to generate the file online.

How to write robots.txt rules

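Simply creating a robots.txt file is not enough; the essence lies in writing appropriate rules for your own website.

robots.txt supports the following rules (each line is a separate example, not one complete file):

    User-agent: *              # * is a wildcard that stands for all search engine crawlers
    Disallow: /admin/          # block crawling of everything under the admin directory
    Disallow: /require/        # block crawling of everything under the require directory
    Disallow: /ABC/            # block crawling of everything under the ABC directory
    Disallow: /cgi-bin/*.htm   # block URLs under /cgi-bin/ (including subdirectories) that contain ".htm"; append $ to match only URLs ending in ".htm"
    Disallow: /*?*             # block every URL on the site that contains a question mark (?)
    Disallow: /*.jpg$          # block all .jpg images (the pattern needs /* in front; /.jpg$ would match only the URL /.jpg)
    Disallow: /ab/adc.html     # block the adc.html file in the ab folder
    Allow: /cgi-bin/           # allow crawling of everything under the cgi-bin directory
    Allow: /tmp                # allow crawling of the entire tmp directory
    Allow: /*.htm$             # allow URLs ending in ".htm" (to allow only these, combine it with Disallow: /)
    Allow: /*.gif$             # allow crawling of .gif images
    Sitemap: (sitemap URL)     # tell crawlers where the sitemap is

It is recommended to use the webmaster tool's robots generation tool to write rules, which will be simpler and clearer.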
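To make the wildcard rules above more concrete, here is a minimal Python sketch of how a crawler might test a single path pattern against a URL path. It assumes the common interpretation where `*` matches any run of characters, a trailing `$` anchors the end of the URL, and everything else is a literal prefix match. The function names `rule_to_regex` and `rule_matches` are made up for this illustration and are not part of any library.

```python
import re

def rule_to_regex(pattern: str) -> re.Pattern:
    """Translate one robots.txt path pattern into a regular expression."""
    # A trailing "$" means the URL must end where the pattern ends.
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape the literal pieces and turn every "*" into ".*".
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))

def rule_matches(pattern: str, url_path: str) -> bool:
    """Return True if the pattern applies to the given URL path."""
    return rule_to_regex(pattern).match(url_path) is not None

if __name__ == "__main__":
    checks = [
        ("/admin/", "/admin/login.php"),
        ("/*?*", "/index.php?id=1"),
        ("/*.jpg$", "/images/photo.jpg"),
        ("/.jpg$", "/images/photo.jpg"),
    ]
    for pattern, path in checks:
        print(pattern, path, rule_matches(pattern, path))
```

The last check shows why `/.jpg$` does not block ordinary image URLs: without `*`, the pattern only matches a URL that is exactly `/.jpg`.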

Naiba Tip: If Disallow: is followed by nothing at all (no slash), crawling of the entire site is allowed. Disallow: / (with a slash) blocks the entire site.
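The difference between an empty Disallow: and Disallow: / can be checked with Python's standard library parser, `urllib.robotparser`. This is only a small sketch: the helper name `allowed` and the crawler name `ExampleBot` are placeholders chosen for the example.

```python
from urllib.robotparser import RobotFileParser

def allowed(robots_txt: str, path: str, agent: str = "ExampleBot") -> bool:
    """Parse robots.txt text and report whether `agent` may fetch `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, path)

# Empty Disallow: nothing is blocked.
ALLOW_ALL = "User-agent: *\nDisallow:\n"
# Disallow with a slash: the whole site is blocked.
BLOCK_ALL = "User-agent: *\nDisallow: /\n"

if __name__ == "__main__":
    print(allowed(ALLOW_ALL, "/any/page.html"))
    print(allowed(BLOCK_ALL, "/any/page.html"))
```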

Recommended robots.txt rules for WordPress

After WordPress installation is complete, a virtual robots.txt rule file is created by default (meaning you cannot see it in the website directory, but you can access it via "your site URL/robots.txt"). The default rules are as follows:

    User-agent: *
    Disallow: /wp-admin/
    Allow: /wp-admin/admin-ajax.php

This rule means that all search engines are prohibited from crawling the content under the wp-admin folder, but are allowed to crawl the /wp-admin/admin-ajax.php file.

However, for website SEO and security considerations, Naiba suggests improving the rules further. Below are the current robots.txt rules for Naibabiji.

    User-agent: *
    Disallow: /wp-admin/
    Disallow: /wp-content/plugins/
    Disallow: /?s=*
    Allow: /wp-admin/admin-ajax.php

    User-agent: YandexBot
    Disallow: /

    User-agent: DotBot
    Disallow: /

    User-agent: BLEXBot
    Disallow: /

    User-agent: YaK
    Disallow: /

    Sitemap: https://blog.naibabiji.com/sitemap_index.xml

The above rules add the following two lines to the default rules:

Disallow: /wp-content/plugins/ Disallow: /?s=*Prohibit crawling of the

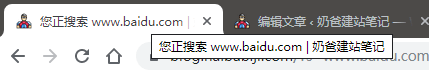

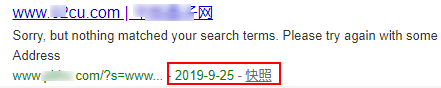

/wp-content/plugins/folder and web pages with the URL/?s=*./wp-content/plugins/is the WordPress plugin directory, to avoid privacy risks from being crawled (e.g., some plugins have privacy leakage bugs that could be crawled by search engines).Prohibit crawling of search result pages to prevent others from using them to boost ranking:Web pages with the URL/?s=*, which is also a bug recently discovered by Naiba being exploited by SEO gray hat projects./?s=*URL is the default search results page for WordPress websites, as shown below: Basically, the vast majority ofWordPress Themessearch page titles are in the form of „keyword + website title“ combination. However, this creates a problem: Baidu has a chance to crawl such pages. For example, one of Naiba„s sites unfortunately got exploited.

The last few rules prohibit specific search engines from crawling the site and give the sitemap address.

Related reading: Several Methods for Generating Sitemaps in WordPress (recommended sitemap plugins)
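To see how the Naibabiji rules listed earlier play out, the following Python sketch evaluates them for a few sample URLs. It rests on several assumptions: the crawler picks the group whose User-agent name equals its own name (falling back to `*`), the longest matching pattern wins, and Allow wins a tie, which is how the major search engines are generally documented to behave. The names `parse_groups`, `can_crawl`, and `ExampleBot` are hypothetical, and real crawlers may match user-agent names more loosely than this.

```python
import re

ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-content/plugins/
Disallow: /?s=*
Allow: /wp-admin/admin-ajax.php

User-agent: YandexBot
Disallow: /

User-agent: DotBot
Disallow: /

User-agent: BLEXBot
Disallow: /

User-agent: YaK
Disallow: /

Sitemap: https://blog.naibabiji.com/sitemap_index.xml
"""

def parse_groups(text):
    """Return {lowercased user-agent: [(is_allow, pattern), ...]}."""
    groups, agents, reading_agents = {}, [], False
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if not reading_agents:  # a new group starts here
                agents = []
            agents.append(value.lower())
            groups.setdefault(value.lower(), [])
            reading_agents = True
        elif field in ("allow", "disallow"):
            reading_agents = False
            if value:  # an empty rule blocks nothing
                for agent in agents:
                    groups[agent].append((field == "allow", value))
        # Other fields, such as Sitemap, are ignored in this sketch.
    return groups

def to_regex(pattern):
    """'*' matches any characters; a trailing '$' anchors the end."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))

def can_crawl(groups, agent, url_path):
    """Longest matching pattern wins; Allow wins a tie; default is allow."""
    rules = groups.get(agent.lower(), groups.get("*", []))
    best = (-1, True)
    for is_allow, pattern in rules:
        if to_regex(pattern).match(url_path):
            best = max(best, (len(pattern), is_allow))
    return best[1]

GROUPS = parse_groups(ROBOTS_TXT)

if __name__ == "__main__":
    for agent, path in [
        ("ExampleBot", "/wp-admin/options.php"),
        ("ExampleBot", "/wp-admin/admin-ajax.php"),
        ("ExampleBot", "/?s=keyword"),
        ("ExampleBot", "/hello-world/"),
        ("YandexBot", "/hello-world/"),
    ]:
        print(agent, path, can_crawl(GROUPS, agent, path))
```

Note that parsers which apply the first matching rule instead of the longest one would treat /wp-admin/admin-ajax.php as blocked, because Disallow: /wp-admin/ appears first in the file.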
