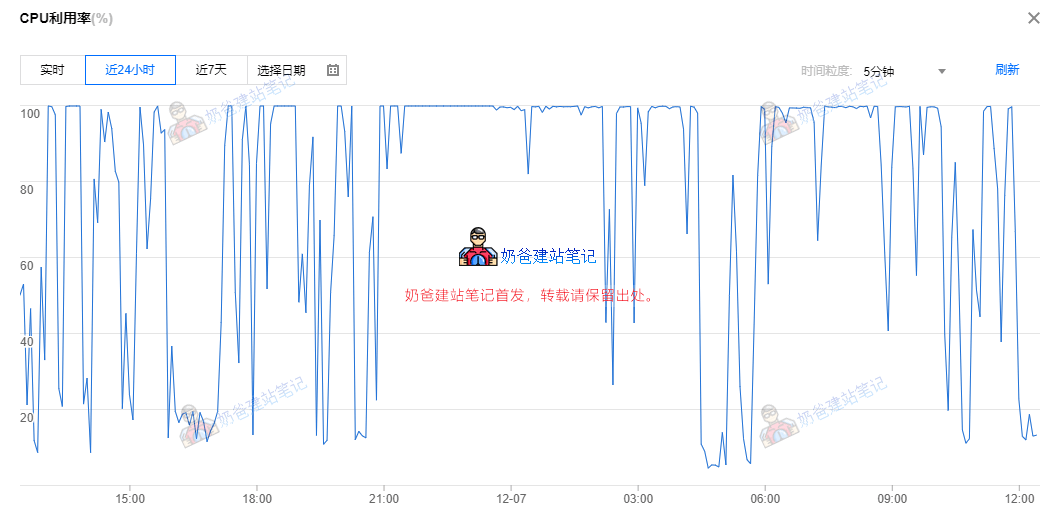

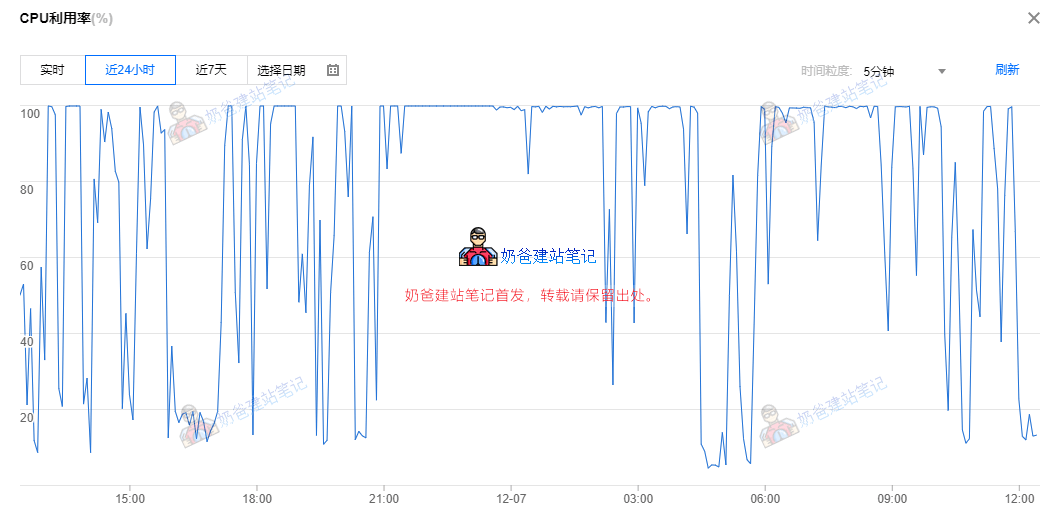

If your web server slows down without a significant increase in traffic, something is probably wrong. If the website hasn't been upgraded or otherwise modified, the cause is usually external: the site is being scraped, or search engines are crawling it too aggressively. Naiba recently checked the server monitoring data and found that CPU usage was very high, as the monitoring screenshot shows.

After analyzing the website logs, it was discovered that Bing's bot was crawling too frequently, so we need to resolve this.
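To make the log analysis step concrete, here is a minimal sketch of counting crawler requests by user agent. It assumes the access log uses the common "combined" format, where the user agent is the last double-quoted field on each line; the sample lines, the `CRAWLER_TOKENS` list, and the function name `count_crawler_hits` are illustrative assumptions, not taken from the original server.

```python
import re
from collections import Counter

# Assumption: combined log format, where the user agent is the
# last double-quoted field on each line.
LAST_QUOTED_FIELD = re.compile(r'"(?P<agent>[^"]*)"\s*$')

# Hypothetical list of crawler tokens to look for; extend as needed.
CRAWLER_TOKENS = ("bingbot", "Googlebot", "Baiduspider")


def count_crawler_hits(lines):
    """Count requests per known crawler token found in the user agent."""
    hits = Counter()
    for line in lines:
        match = LAST_QUOTED_FIELD.search(line)
        if not match:
            continue  # skip lines that do not end with a quoted field
        agent = match.group("agent").lower()
        for token in CRAWLER_TOKENS:
            if token.lower() in agent:
                hits[token] += 1
                break
    return hits


# Made-up sample lines for illustration only (documentation IP range).
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Oct/2023:13:55:36 +0000] "GET / HTTP/1.1" 200 2326 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '203.0.113.5 - - [10/Oct/2023:13:55:37 +0000] "GET /a HTTP/1.1" 200 1200 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '203.0.113.5 - - [10/Oct/2023:13:55:38 +0000] "GET /b HTTP/1.1" 200 1300 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '203.0.113.9 - - [10/Oct/2023:13:56:01 +0000] "GET / HTTP/1.1" 200 2326 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.20 - - [10/Oct/2023:13:56:10 +0000] "GET / HTTP/1.1" 200 2326 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    'malformed line without quotes',
]

if __name__ == "__main__":
    for token, count in count_crawler_hits(SAMPLE_LOG).most_common():
        print(f"{token}: {count}")
```

On a real server you would pass the lines of the actual access log file instead of `SAMPLE_LOG`. A crawler that dominates the counts is a likely candidate for throttling.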

The solution is to limit Bing's crawl frequency.

Method 1: Set in the Admin Dashboard

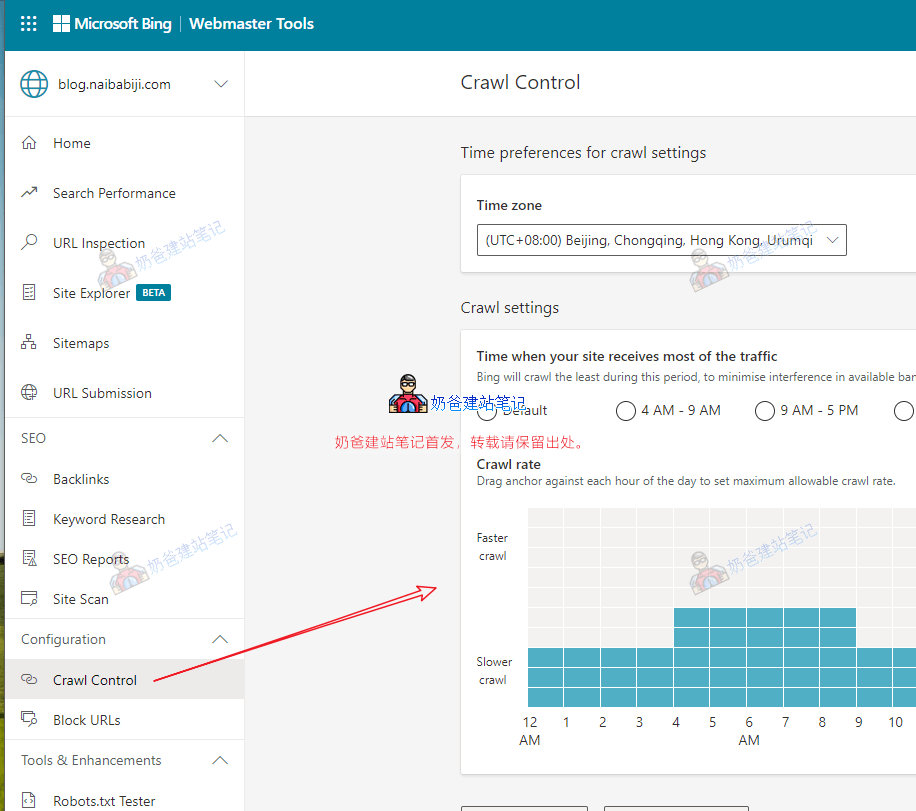

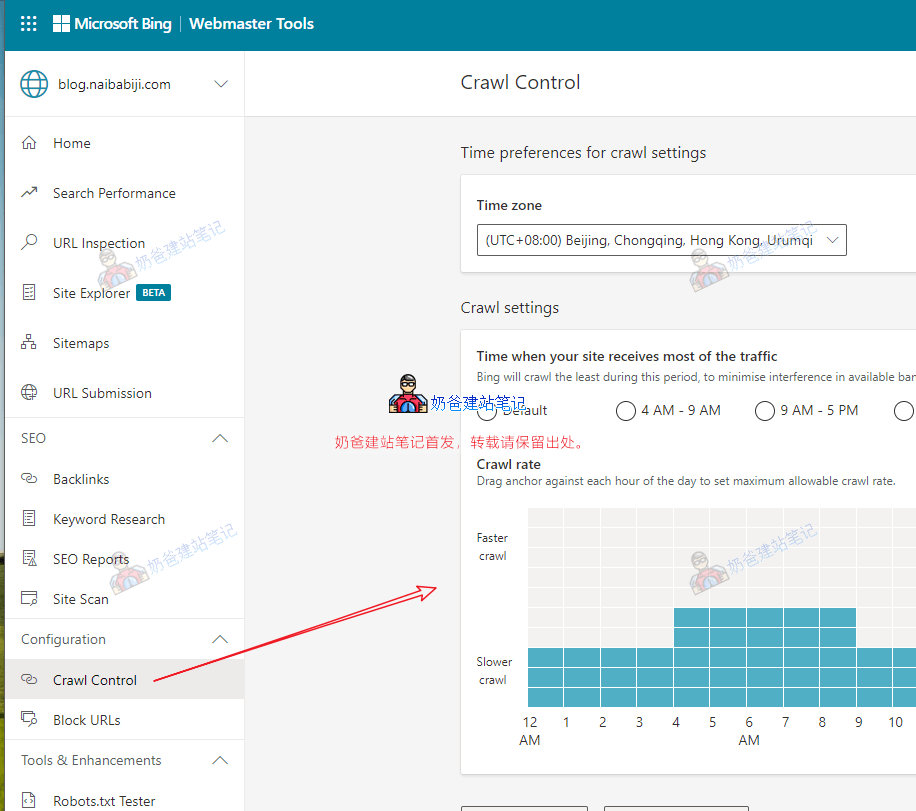

If you have registered for Bing Webmaster Tools, open the Crawl Control menu in the dashboard and set the crawl frequency on the right side.

Method 2: Set via the robots.txt file

If you don't know what robots.txt is, please read this first:

What is robots.txt? The Correct WordPress robots.txt Writing Method and Generation Tool

We can add the `Crawl-delay` parameter in the robots.txt file.

    User-agent: *
    Crawl-delay: 1

The code above limits the crawl frequency to "slow" for every search engine that honors the directive. The two lines must stay together in the file, because a blank line between them can end the `User-agent` group. If `Crawl-delay` is not set, the search engine decides the crawl frequency itself. The value can be set to 1, 5, or 10, corresponding to slow, very slow, and extremely slow respectively.
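Before uploading a robots.txt change, it can help to check how a parser reads it. The following is a rough sketch using Python's standard `urllib.robotparser`. The robots.txt content here is a hypothetical variant that gives Bing's crawler (user agent token `bingbot`) a stricter delay than everyone else; the values and the helper name `crawl_delay_for` are assumptions for illustration. Note that Python's parser is only a stand-in, and each search engine may interpret the file slightly differently.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: a stricter delay for Bing's crawler,
# and a milder default for every other crawler.
ROBOTS_TXT = """\
User-agent: bingbot
Crawl-delay: 5

User-agent: *
Crawl-delay: 1
"""


def crawl_delay_for(robots_txt, user_agent):
    """Return the Crawl-delay that applies to user_agent, or None if unset."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.crawl_delay(user_agent)


if __name__ == "__main__":
    for agent in ("bingbot", "SomeOtherBot"):
        print(agent, crawl_delay_for(ROBOTS_TXT, agent))
```

Using a dedicated `User-agent: bingbot` group keeps the stricter limit scoped to the crawler that caused the load, rather than slowing down every search engine.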

For other search engines, such as Google and Baidu, you can set the crawl frequency in their respective webmaster tools or via the robots.txt file. Relatively speaking, the robots.txt method takes effect more slowly. Not every crawler honors `Crawl-delay` (Googlebot, for one, ignores it), so check each search engine's documentation.

